Part 1: Dealing with a lack of ethics in digital product design

Introduction

Underpinning all that we do at Spatial is a commitment to bringing a human lens to technology products and services, and a desire to create a future that respects and connects people and operates with integrity.

In this series of articles, we will share the work we’ve been doing to develop a holistic, human-centred and ethical framework for assessing technology products and services.

We created this framework to bring ethical standards into our own research and design practice in a way that is sensible, actionable, and measurable. We believe other product professionals, including researchers, designers, developers and product owners, want to do the same, and together we can raise the bar in terms of best practice in digital product design.

This first article in the series introduces our Human Impact Research Framework and explains why we believe it is both necessary and timely.

The back story

In 2016, there was a lot of hype in the tech industry around virtual and augmented reality (VR/AR) and we were exploring how to evaluate and design for these experiences.

There was a lot of focus on the technology and some on physical impacts, but very little attention to the psychological and sociological implications of wearing a computer on your head. We decided to do some research of our own into evaluation heuristics and protocols for VR/AR. Our intention was to establish a more holistic set of criteria, based on usability and impact, against which emerging tech applications could be assessed.

In 2017, we partnered with the UBC Psychology Department’s Behaviour Attention and Reality Lab and were awarded a grant for research into the social dynamics and human impacts of VR/AR (now called XR for ‘extended reality’). We thought these insights could help support better, more ethical product design and development decisions.

And then we had a revelation: it isn’t just emerging tech that needs a different approach to evaluating what ‘good’ looks like; it’s also the technology we use every day!

BIG negative impacts

All around us, we see examples of the negative impacts of technology: technology addiction; cyber-security breaches, with stolen data being used in scams, extortion, fraud and identity theft; and our collective personal data being mined, sold and used against us to create fake news designed to intentionally deceive, manipulate, and undermine people, the media, and our democratic system. All with little or no accountability for negative impacts.

How is this ok?

Events in the US and the Black Lives Matter movement have highlighted (again) the systemic discrimination and inequity that exist in North America and around the world. Many governments have laws and/or policies in place that protect equality, but as the term ‘systemic’ implies, bias and discrimination permeate society, and changing that will take intentional effort.

Can regulation bring ethics into technology?

We are certainly not the only people talking about ethics in technology, and that’s heartening. And to be clear, this isn’t just a technology problem. It’s a political, business and moral problem that is going unchecked.

People within all societies behave according to some form of moral code (even though our moral codes can vary widely). And most professions, including research, product design and software engineering, have codes of ethics based on moral principles. However, all too often, when it comes to business decision-making, the pursuit of profit takes precedence over all else and ethics doesn’t get a mention.

That’s why there’s been a need for regulation across almost every single industry, to ensure businesses operate within certain standards that protect people and the environment, define what is culturally acceptable in terms of impact or risk, and establish a level of trust and security within society.

The technology industry, however, is one of the few largely unregulated industries, despite being an intrinsic and essential part of our lives. In the US, there is no comprehensive federal legislation on data privacy and personal data protection to prevent the misuse or disclosure of personal data. By contrast, the European Union’s General Data Protection Regulation (GDPR) effectively sets a global standard.

The tech industry would have us believe that government-imposed regulation will stifle innovation. It might, but every other industry seems to deal with it. The tech industry also says that ‘self-regulation’ is sufficient. But given that technology has been used to manipulate elections and erode trust within society, self-regulation is clearly not enough.

Innovative technologies should solve problems, not create more problems. Where products and services have the potential to cause harm, designers and developers have an obligation to mitigate these harms. At the moment, individuals and society pay the price, while technology companies make billions of dollars and become even more powerful.

Personally, I’m in favour of regulation, even if, in the short term, it only means complying with the GDPR. However, I don’t think regulation is a panacea, or that it is enough on its own. Self-regulation is still important because technology will always advance, and grow more complex, faster than our ability to regulate it. And for these advanced technologies, we should expect researchers, designers and developers to follow professional codes of ethics.

How can we make a difference?

Indignation is easy. Galvanizing action to create change is hard. But researchers, designers and engineers love to solve problems, and there are a lot of people like us who believe the technology industry can be an engine of innovation while operating within a moral framework that ensures people aren’t harmed and that principles of democracy and human rights are protected.

Idealistic, I know.

For our part, we’ve developed a framework for assessing digital products and services that puts ethics at the heart of the design and development process.

Our Human Impact Research Framework

‘The Human Impact Research Framework’ is an approach to evaluating product performance. It extends current assessment standards, which focus on usability, to include measures of human impact, giving a more holistic and ethical perspective on what ‘good’ products should look like. It also broadens standard assessment criteria beyond those suited to 2D screen interfaces to cover a wider spectrum of experience design.

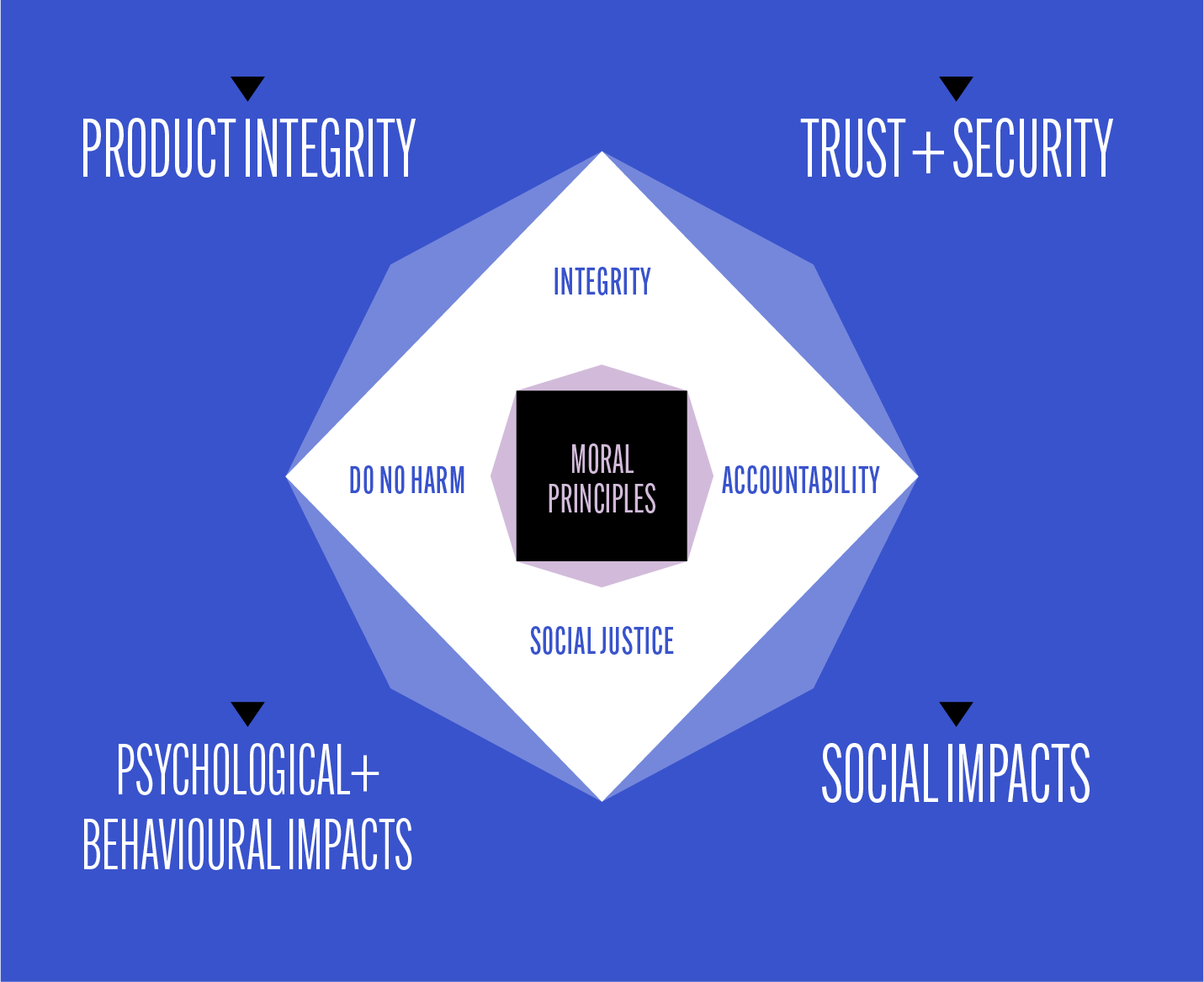

At the centre of our framework are moral principles that are distilled from standard ethical guidelines across research, science, engineering and product design:

- Integrity

- Accountability

- Social justice

- Do no harm

Aligned with these principles, we have four categories that give us a more holistic perspective of user experience:

- Product integrity

- Trust and security

- Psychological and behavioural impacts

- Social impacts

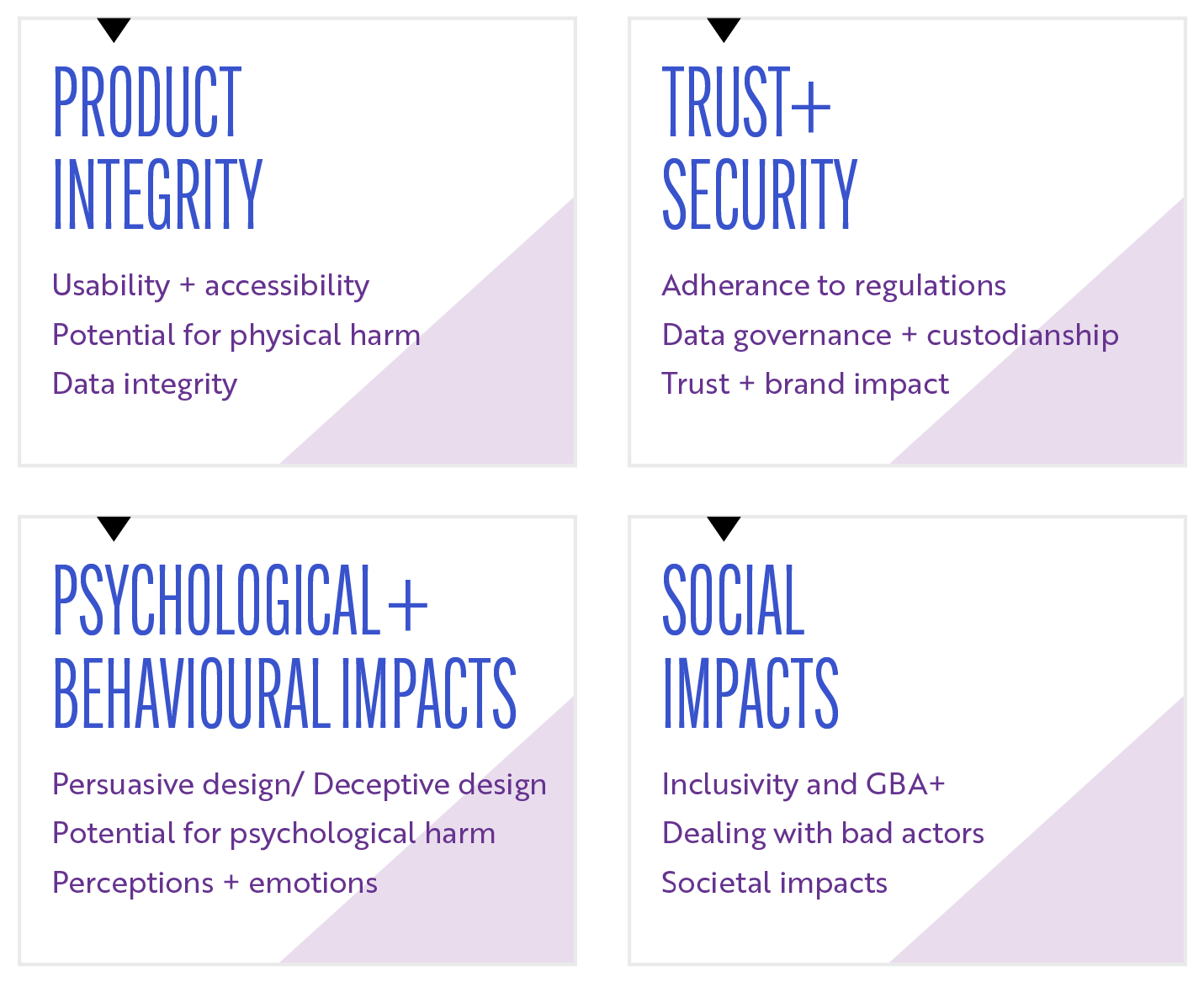

Under each category, we have identified key performance indicators (KPIs) that can be used alone or combined to evaluate product or service performance.

For senior executives, product leaders and managers accountable for brand reputation and product performance, the Human Impact Research Framework can be customized to support a program of product optimization and continuous improvement. For designers and developers, it can be a resource for establishing best-practice requirements.

In the next article in the series, we’ll go into more detail about each category and explain the KPIs that can be used to assess product performance.